Online meetings now drive daily work across the world. Teams talk through video platforms every day. Clear sound helps people share ideas and solve problems fast. Yet many meetings still suffer from weak audio. Background noise and echo break the flow of conversation.

Studies from the organization show that more than 300 million people join online meetings each day.

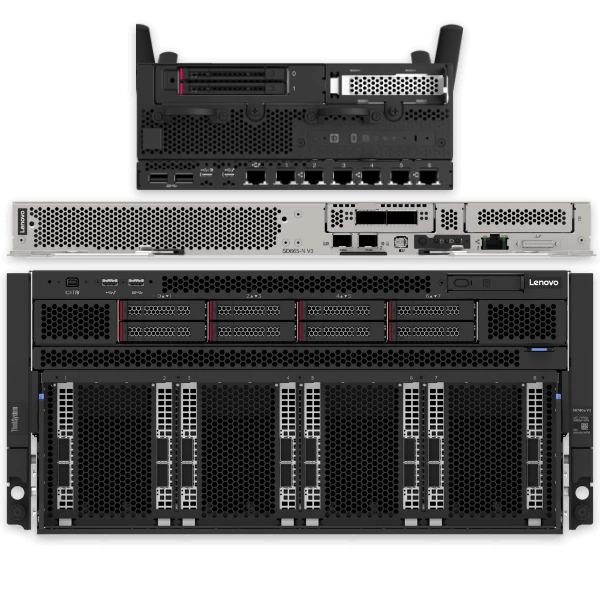

That number keeps growing each year. As remote work rises, people need better sound quality. Smart audio technology inside modern computers now solves this problem. Many systems now include advanced audio AI features. These tools run inside a powerful AI desktop PC. The system studies sound in real time. It then improves voice clarity during calls.

So today, we’ll outline how smart AI PC audio improves online meeting sound.

1. Smart Noise Reduction Removes Background Distractions

Background noise often ruins a meeting's sound. Keyboards click. Fans run. Street sounds enter the microphone. These sounds confuse listeners and reduce speech clarity. Smart audio AI now solves this issue. The system studies sound patterns and separates the human voice from noise. It filters unwanted sounds instantly.

Many contemporary systems handle this task on AI hardware built into AI desktop PCs. The processor runs deep learning models that identify voice signals. Once the system detects noise, it lowers the level of that noise. At the same time, it protects the human voice signal. This keeps speech natural and clear.

These systems detect noise sources such as:

- Keyboard typing

- Fan noise

- Office chatter

- Street traffic

- Air conditioner hum
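To make the idea concrete, here is a minimal sketch of a much simpler technique than the deep learning models described above: an energy-based noise gate. It assumes the first few frames of the recording contain only background noise, and it turns down any frame whose level stays close to that noise floor. The function names and parameters (`noise_gate`, `margin`, `attenuation`) are illustrative choices, not part of any real product.

```python
import math

def frame_rms(frame):
    """Root-mean-square level of one frame of samples."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))

def noise_gate(samples, frame_size=160, noise_frames=5, margin=2.0, attenuation=0.1):
    """Attenuate frames whose level is close to the estimated noise floor.

    Assumption: the first `noise_frames` frames contain background noise only.
    """
    frames = [samples[i:i + frame_size] for i in range(0, len(samples), frame_size)]
    # Estimate the noise floor from the noise-only lead-in.
    noise_floor = sum(frame_rms(f) for f in frames[:noise_frames]) / noise_frames
    threshold = noise_floor * margin
    out = []
    for f in frames:
        # Keep loud (likely speech) frames untouched, turn quiet frames down.
        gain = 1.0 if frame_rms(f) > threshold else attenuation
        out.extend(s * gain for s in f)
    return out
```

A gate like this only works between words, because it cannot remove noise that overlaps speech. That gap is what learned models aim to close.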

2. Voice Isolation Focuses on the Main Speaker

Online meetings often include many voices in one room. Sometimes several people talk near the same microphone. Standard audio systems capture all sounds together. This makes the speech unclear. AI audio technology isolates the main speaker. It identifies the strongest voice signal and prioritizes it. The system then lowers other sounds.

How AI Voice Detection Works

Audio AI uses machine learning models trained on thousands of speech samples. These models recognize voice frequency patterns. They detect pitch, rhythm, and speech tone. Once the system finds the speaker, it strengthens that signal. At the same time, it lowers background voices.

This process runs instantly inside a modern AI PC. Dedicated neural processors handle the workload without slowing the computer. The result feels natural during meetings. Participants hear one clear voice rather than many mixed sounds.
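The prioritization step can be sketched in a few lines. This example assumes the hard part is already done, namely that the voices have been separated into one stream per speaker. It then picks the stream with the highest level and turns the others down. `prioritize_main_speaker` and `background_gain` are hypothetical names used only for illustration.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def prioritize_main_speaker(sources, background_gain=0.2):
    """Return (index of strongest source, mix with other sources turned down).

    Assumption: `sources` holds one already-separated stream per speaker.
    """
    levels = [rms(src) for src in sources]
    main = max(range(len(sources)), key=lambda i: levels[i])
    length = min(len(src) for src in sources)
    mix = []
    for n in range(length):
        # Full level for the main speaker, reduced level for everyone else.
        mix.append(sum((1.0 if i == main else background_gain) * src[n]
                       for i, src in enumerate(sources)))
    return main, mix
```

For example, with a quiet stream and a loud stream, the loud one is selected as the main speaker and the quiet one is mixed in at 20 percent of its original level.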

3. Echo Cancellation Keeps Conversations Clear

Echo creates one of the most frustrating meeting problems. It occurs when a microphone picks up sound from the speakers of the same device. The delayed sound travels back into the call. This causes repeating voices that distract listeners. Smart AI audio solves this issue through echo cancellation technology.

AI Echo Control Improves Sound Flow

The system monitors the audio output and the microphone input at the same time. It compares both signals using AI algorithms. If the microphone signal contains a copy of the speaker output, the system removes that copy before sending the audio to other participants.
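The comparison of speaker output and microphone input can be illustrated with a classic, non-AI technique: a normalized least-mean-squares (NLMS) adaptive filter. The sketch below is not the method any particular AI PC uses. It learns how the speaker signal shows up in the microphone and subtracts that estimate. The names `far_end`, `mic`, `taps`, and `mu` are assumptions chosen for the example.

```python
def nlms_echo_cancel(far_end, mic, taps=8, mu=0.5, eps=1e-8):
    """Subtract an adaptive estimate of the speaker echo from the mic signal.

    far_end: samples sent to the loudspeaker.
    mic:     samples captured by the microphone (voice plus echo).
    Returns the echo-reduced microphone signal.
    """
    weights = [0.0] * taps      # current estimate of the echo path
    history = [0.0] * taps      # most recent far-end samples, newest first
    out = []
    for x, d in zip(far_end, mic):
        history = [x] + history[:-1]
        echo_estimate = sum(w * h for w, h in zip(weights, history))
        error = d - echo_estimate          # what is left after removing the echo
        power = sum(h * h for h in history) + eps
        # Nudge the weights toward values that would have cancelled this sample.
        weights = [w + mu * error * h / power for w, h in zip(weights, history)]
        out.append(error)
    return out
```

When the echo path is short and nobody is speaking at the near end, the residual shrinks toward zero after a brief adaptation period. Real systems also have to handle both sides talking at once, which this sketch ignores.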

This process runs in real time, so participants rarely notice any echo. Modern meeting platforms depend on this feature when people use external speakers or large displays.

Hardware acceleration inside an AI desktop PC allows this process to run continuously without delay. Clear audio helps conversations move naturally from one speaker to another.

4. Automatic Volume Control Balances Every Voice

Not every meeting participant speaks at the same volume. Some voices sound quiet while others sound loud. This forces listeners to adjust speaker volume many times during the call. Smart AI audio solves this challenge through automatic volume control.

The system measures voice loudness in real time. It then adjusts audio levels so every voice stays balanced. This technology creates a stable listening experience.

Benefits of automatic voice balancing include:

- Clear speech during group discussions

- Consistent volume across speakers

- Reduced need for manual adjustments

- Better listening comfort during long meetings

The AI processor inside an AI PC performs this task constantly during calls. The system measures the signal level continuously. It then applies small adjustments to keep volume levels even.
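The measure-then-adjust loop can be sketched as a simple automatic gain control. This is a minimal illustration under assumed values, not a description of any vendor's implementation. `target_rms`, `smoothing`, and `max_gain` are hypothetical tuning parameters.

```python
import math

def automatic_gain_control(samples, target_rms=0.1, frame_size=160,
                           smoothing=0.9, max_gain=10.0):
    """Scale each frame so the output level drifts toward `target_rms`."""
    gain = 1.0
    out = []
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        level = math.sqrt(sum(s * s for s in frame) / len(frame))
        # Skip near-silent frames so the gain does not chase the noise floor.
        if level > 1e-6:
            desired = min(max_gain, target_rms / level)
            # Move only part of the way each frame to avoid sudden jumps.
            gain = smoothing * gain + (1.0 - smoothing) * desired
        out.extend(s * gain for s in frame)
    return out
```

The smoothing factor is the design choice that matters most here. A value near 1 changes the gain slowly and sounds stable, while a lower value reacts faster but can make the volume audibly pump.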

This creates a professional meeting sound experience. Participants hear every word clearly without sudden changes in audio levels.

5. Beamforming Microphones Capture Voice Direction

Modern AI audio systems also improve microphone accuracy. Traditional microphones capture sound from every direction. This includes noise from around the room.

Beamforming technology changes how microphones capture sound. It uses several microphones that work together as one system. These microphones detect the direction of the speaker.

Directional Audio Improves Meeting Focus

AI algorithms study the sound arrival time at each microphone. This helps the system calculate where the voice comes from. The system then focuses on that direction and ignores other noise sources.
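The arrival-time calculation can be shown with two microphones and plain cross-correlation, followed by a basic delay-and-sum step. This is a textbook sketch rather than the AI-based method described above. It assumes two equal-length recordings, a known microphone spacing, and a speed of sound of about 343 m/s. All function names are illustrative.

```python
import math

def estimate_delay(mic_a, mic_b, max_lag):
    """Lag in samples by which mic_b trails mic_a, found by cross-correlation."""
    n = len(mic_a)
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = sum(mic_a[i] * mic_b[i + lag]
                    for i in range(max(0, -lag), min(n, n - lag)))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag

def arrival_angle_degrees(lag, sample_rate, mic_spacing_m, speed_of_sound=343.0):
    """Convert a sample lag into an angle relative to straight ahead."""
    ratio = speed_of_sound * lag / (sample_rate * mic_spacing_m)
    ratio = max(-1.0, min(1.0, ratio))   # guard against rounding past +/-1
    return math.degrees(math.asin(ratio))

def delay_and_sum(mic_a, mic_b, lag):
    """Align mic_b to mic_a using the lag, then average the two channels."""
    n = len(mic_a)
    out = []
    for i in range(n):
        j = i + lag
        b = mic_b[j] if 0 <= j < n else 0.0
        out.append(0.5 * (mic_a[i] + b))
    return out
```

Sound from the estimated direction adds up in phase after alignment, while sound from other directions partly cancels. Arrays with more microphones sharpen this effect.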

This method improves speech clarity, especially in open offices. Many enterprise systems integrate beamforming microphones directly into devices powered by an AI desktop PC. These systems work well for conference calls and hybrid meetings.

Participants hear speech clearly even if the speaker sits several feet away from the computer. This technology helps remote participants stay engaged during discussions.

6. Real-Time Audio Enhancement Improves Speech Quality

Smart audio AI also improves the voice quality itself. Human speech includes many frequencies. Some frequencies carry most of the meaning. Poor microphones often lose these frequencies. This makes speech sound flat or muffled.

Audio enhancement technology restores these details. AI systems analyze the incoming voice signal. They then strengthen important speech frequencies. This process improves clarity and warmth in the speaker's voice.
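As a rough illustration of strengthening selected frequencies, the sketch below uses a simple fixed filter instead of an AI model. It splits the signal into a slow part and a fast part with a one-pole low-pass filter, then adds extra weight to the fast part, which is where much of the consonant detail sits. `boost` and `alpha` are assumed tuning values, and `boost_high_frequencies` is a hypothetical name.

```python
def boost_high_frequencies(samples, boost=1.0, alpha=0.1):
    """Emphasize faster-changing detail in a signal with a simple shelf-style boost.

    alpha: low-pass smoothing factor (smaller means a lower split frequency).
    boost: how much extra high-frequency content to add back.
    """
    low = 0.0
    out = []
    for s in samples:
        low += alpha * (s - low)    # one-pole low-pass tracks the slow part
        high = s - low              # the remainder is the faster detail
        out.append(s + boost * high)
    return out
```

With these defaults at a 16 kHz sample rate, a 50 Hz tone passes through almost unchanged while a 4 kHz tone comes out nearly twice as strong. A learned model would instead decide per moment which bands to restore.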

Real-time audio enhancement provides several benefits:

- Stronger voice clarity

- Reduced distortion

- Better speech tone

- Improved listening comfort

These improvements happen in real time during meetings. The computing power inside an AI PC supports advanced audio processing models. These models refine voice signals without adding noticeable delay.

Conclusion

Online meetings have become a core part of modern work. Clear communication now depends on strong audio performance. Smart AI audio technology transforms how meeting sound works. It removes noise and cancels echo. It balances voice levels and enhances speech quality. Beamforming microphones capture sound direction while AI models improve clarity.

Modern computing hardware makes this possible. Systems powered by an AI desktop PC deliver the processing power needed for real-time audio intelligence.

As remote work continues to grow, smart audio technology will become even more important. Businesses and professionals rely on clear communication every day. AI-driven sound systems help meetings stay productive, engaging, and easy to understand.
