Bounding box annotation helps self-driving cars detect and localize objects around them. This article explains why it's essential for safe, reliable autonomous navigation.

Self-driving cars are no longer a futuristic dream; they're being tested and used in real-world environments every day. But have you ever wondered how these vehicles "see" and understand the world around them? The answer lies in advanced AI powered by carefully labeled data, and one of the most critical techniques used in this process is bounding box annotation.

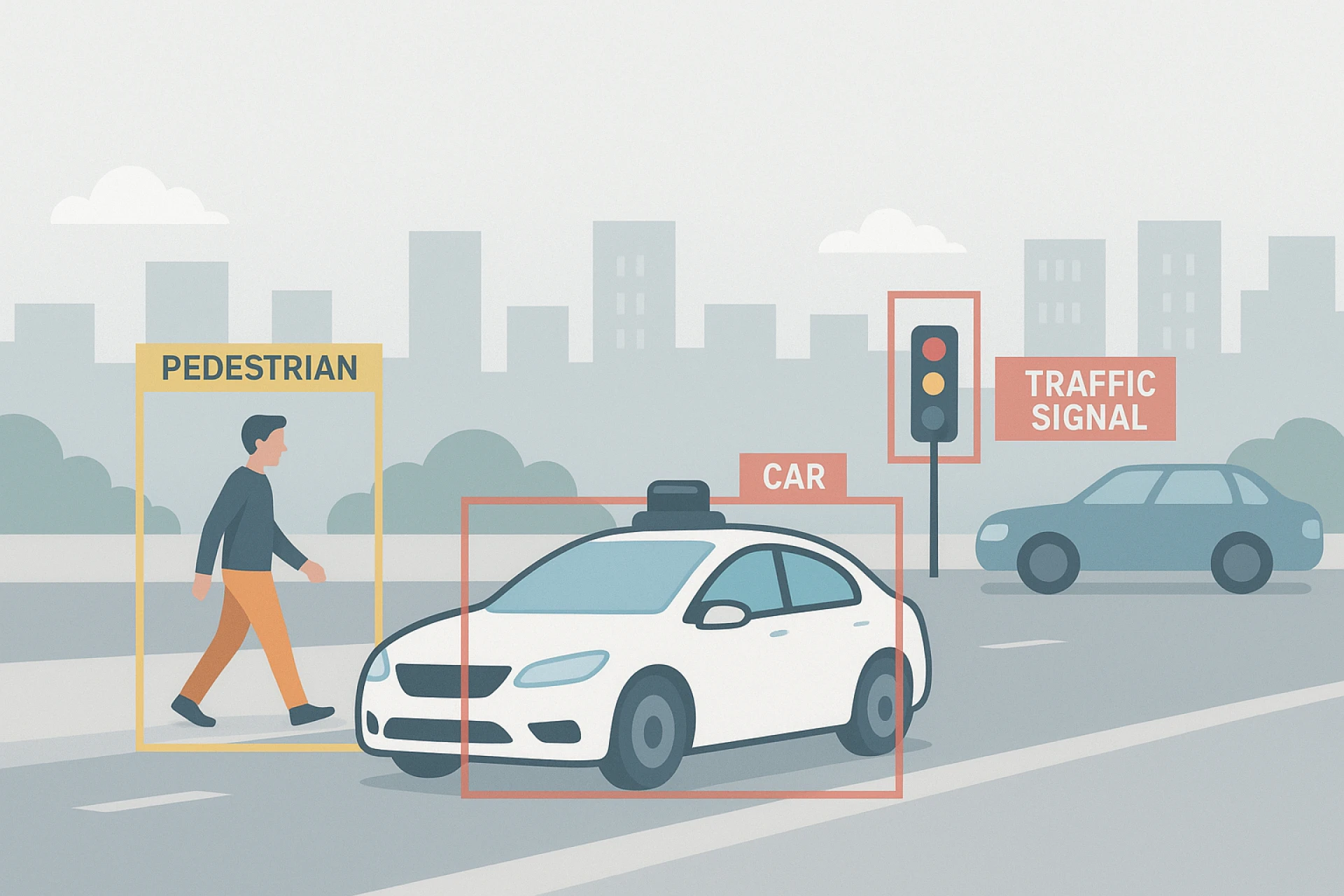

From detecting pedestrians to identifying traffic signs and other vehicles, bounding boxes play a foundational role in how autonomous vehicles perceive their surroundings. In this article, we will explore the importance of bounding box annotation in self-driving car technology, how it works, and why it's vital for safety, accuracy, and real-time decision-making.

Why Object Detection Is Critical for Autonomous Driving

For a self-driving car to operate safely, it must be able to:

· Detect objects (like cars, people, bicycles, animals)

· Understand their location and movement

· Predict how they’ll behave in the next few moments

· Make safe driving decisions in real time

This process relies heavily on object detection, which is where bounding box annotation comes in. By teaching AI models what objects are and where they are located, bounding boxes become the foundation for virtually all visual understanding in autonomous navigation.

What Is Bounding Box Annotation?

Bounding box annotation involves drawing rectangles around objects of interest in images or video frames. Each rectangle records the object’s position and size, and an attached label records its class (such as “pedestrian” or “vehicle”).

In the context of self-driving cars, bounding boxes are used to mark things like:

· Vehicles: Sedans, trucks, buses, motorcycles

· Pedestrians: People walking, standing, or crossing the street

· Traffic infrastructure: Stop signs, traffic lights, cones, lane markers

· Obstacles: Animals, fallen trees, debris on the road

Each labeled image becomes a data point that helps the vehicle’s AI system learn what to look for in real-world driving situations.
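As a rough illustration of what one such annotation holds, here is a minimal Python sketch. The `BoundingBox` class and its field names are hypothetical, chosen for this example only; real datasets and labeling tools each define their own schema, and some store a corner plus width and height instead of two corners.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class BoundingBox:
    """Axis-aligned box in pixel coordinates (hypothetical schema)."""

    label: str    # object class, e.g. "pedestrian"
    x_min: float  # left edge
    y_min: float  # top edge
    x_max: float  # right edge
    y_max: float  # bottom edge

    def __post_init__(self) -> None:
        # Reject degenerate boxes early; they usually indicate a labeling slip.
        if self.x_max <= self.x_min or self.y_max <= self.y_min:
            raise ValueError("box must have positive width and height")

    @property
    def width(self) -> float:
        return self.x_max - self.x_min

    @property
    def height(self) -> float:
        return self.y_max - self.y_min

    @property
    def center(self) -> Tuple[float, float]:
        return ((self.x_min + self.x_max) / 2, (self.y_min + self.y_max) / 2)


# One labeled object in one frame.
box = BoundingBox("pedestrian", x_min=100, y_min=50, x_max=140, y_max=170)
print(box.label, box.width, box.height, box.center)
```

The position, size, and class described above all fall out of these five fields, which is part of why bounding boxes are comparatively quick to draw and store.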

How Bounding Boxes Help AI “See” the Road

Let’s simplify the process:

· Data Collection: Cameras mounted on the vehicle capture video and still images as the car drives.

· Bounding Box Annotation: Human annotators draw boxes around key objects in these frames and label them accordingly.

· Model Training: These annotated images are used to train computer vision models to detect and track objects in real time.

· Real-Time Inference: Once trained, the AI uses similar visual input to make driving decisions on the fly, like braking for a pedestrian or changing lanes.

Without accurate bounding box annotation at the training stage, the model wouldn't be able to recognize or locate objects reliably when driving autonomously.

Why Bounding Box Accuracy Matters in Autonomous Driving

When you are building a model that controls a moving vehicle, there is very little room for error. Inaccurate annotations can lead to:

· False positives (detecting something that isn't there)

· False negatives (missing a real object)

· Delayed reactions to hazards

· Unsafe decisions based on poor object recognition

That’s why bounding boxes must be:

· Precisely aligned with object edges

· Tightly fitted, not overly loose

· Consistently labeled across thousands of frames

Even a small annotation mistake in a high-stakes environment like urban driving can have serious consequences.
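One common way to put a number on how tightly a box fits is intersection over union (IoU): the area two boxes share divided by the area they cover together. The sketch below compares an annotator's box against a reference box. The `(x_min, y_min, x_max, y_max)` tuple layout, the `needs_review` helper, and the 0.9 threshold are assumptions made for illustration, not a standard.

```python
def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix_min, iy_min = max(a[0], b[0]), max(a[1], b[1])
    ix_max, iy_max = min(a[2], b[2]), min(a[3], b[3])
    # Width and height of the overlap, clamped to zero when boxes are disjoint.
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def needs_review(annotated, reference, threshold=0.9):
    """Flag an annotation whose overlap with the reference is too low.

    The 0.9 threshold is a hypothetical project setting.
    """
    return iou(annotated, reference) < threshold


reference = (100, 50, 140, 170)
tight = (101, 51, 140, 169)   # off by about a pixel on three edges
loose = (90, 40, 150, 180)    # 10 px of padding on every side

print(round(iou(reference, tight), 3), needs_review(tight, reference))
print(round(iou(reference, loose), 3), needs_review(loose, reference))
```

The loosely drawn box still contains the whole object, yet its IoU drops sharply, which is why tight fitting is usually checked separately from whether the object was labeled at all.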

Key Object Classes Annotated with Bounding Boxes

Let’s look at some of the most critical object types commonly annotated in self-driving datasets:

· Pedestrians: Recognizing walking patterns, distances, and potential crossing behavior

· Vehicles: Tracking other cars, bikes, motorcycles, and heavy vehicles

· Traffic Lights & Signs: Understanding rules and signaling when to stop, go, or yield

· Unexpected Obstacles: Identifying animals, debris, or parked vehicles in no-parking zones

· Lane Markers & Road Edges (sometimes annotated with a combination of bounding boxes and line annotations): Helping the vehicle stay in its lane or safely change lanes

Training vs. Testing: Bounding Boxes in the Development Cycle

Bounding box annotation is crucial not only during training but also during model testing and evaluation. By applying annotations to test data, engineers can measure:

· Detection accuracy

· Object localization precision

· Missed detections or overlaps

· Model confidence in various lighting and weather conditions

This feedback loop is essential for refining and improving self-driving performance over time.
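A simplified sketch of how such measurements can be computed is shown below: each predicted box is matched to at most one annotated box by IoU (intersection over union), and the matches are counted. The greedy matching strategy, the 0.5 IoU threshold, and the function names are assumptions for illustration; established benchmarks define their own matching rules and metrics.

```python
def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def evaluate(predictions, ground_truth, iou_threshold=0.5):
    """Greedy one-to-one matching of predictions to annotated boxes.

    predictions: list of (box, confidence score) pairs
    ground_truth: list of annotated boxes
    """
    matched = set()
    true_positives = 0
    # Give the most confident predictions first pick of the annotations.
    for box, _score in sorted(predictions, key=lambda p: p[1], reverse=True):
        best_iou, best_idx = 0.0, None
        for idx, gt_box in enumerate(ground_truth):
            if idx in matched:
                continue
            overlap = iou(box, gt_box)
            if overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_idx is not None and best_iou >= iou_threshold:
            matched.add(best_idx)
            true_positives += 1
    false_positives = len(predictions) - true_positives  # nothing there
    false_negatives = len(ground_truth) - true_positives  # missed objects
    precision = true_positives / len(predictions) if predictions else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return {
        "tp": true_positives,
        "fp": false_positives,
        "fn": false_negatives,
        "precision": precision,
        "recall": recall,
    }


ground_truth = [(100, 50, 140, 170), (300, 200, 380, 260)]
predictions = [((102, 52, 141, 168), 0.9), ((500, 500, 520, 520), 0.6)]
result = evaluate(predictions, ground_truth)
print(result)
```

In this toy example one prediction lines up with an annotated pedestrian, one fires on empty road, and one annotated object goes undetected, so the three counts map directly onto correct detections, false positives, and missed detections.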

Challenges Specific to Self-Driving Datasets

Self-driving car data is complex, and that makes bounding box annotation more challenging than standard image labeling tasks. Some of the unique issues include:

· Varying Lighting Conditions

Annotators must handle dusk, nighttime, glare, and shadows, which can obscure object boundaries.

· Weather Variability

Rain, fog, or snow may partially block the view, making consistent annotations more difficult but still necessary.

· Occlusions and Overlapping Objects

Bounding boxes must account for partially hidden pedestrians or vehicles, which require skilled human judgment.

· High Frame Volumes

Video data requires frame-by-frame annotation, and consistency across thousands of frames is key to accurate object tracking.

These challenges are exactly why working with trained annotators who have experience in self-driving projects is essential.
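To cope with high frame volumes, many annotation workflows have a person label only selected keyframes and fill in the frames between them automatically. The sketch below shows plain linear interpolation between two keyframe boxes. The function name and tuple layout are hypothetical, and the approach assumes the object moves and scales roughly steadily between keyframes, so the generated boxes would still need human review.

```python
def interpolate_boxes(start_box, end_box, start_frame, end_frame):
    """Linearly interpolate (x_min, y_min, x_max, y_max) boxes between keyframes.

    Returns a dict mapping every frame index from start_frame to end_frame
    (inclusive) to a box.
    """
    if end_frame <= start_frame:
        raise ValueError("end_frame must come after start_frame")
    span = end_frame - start_frame
    boxes = {}
    for frame in range(start_frame, end_frame + 1):
        t = (frame - start_frame) / span  # 0.0 at the first keyframe, 1.0 at the last
        boxes[frame] = tuple(s + t * (e - s) for s, e in zip(start_box, end_box))
    return boxes


# A vehicle drifts 20 px to the right over four frames.
track = interpolate_boxes((100, 50, 140, 170), (120, 50, 160, 170), 0, 4)
for frame, box in track.items():
    print(frame, box)
```

Occlusions, sudden braking, or camera shake break the steady-motion assumption, which is where extra keyframes and the human judgment mentioned above come back in.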

Bounding Boxes Enable Real-Time Decision-Making

Self-driving cars rely on object detection models to make split-second decisions. Bounding boxes allow the AI to:

· Track the movement of nearby vehicles

· Predict pedestrian behavior (e.g., crossing the street)

· Navigate through intersections

· Detect and respond to obstacles

· Recognize when to slow down or stop

In other words, bounding box annotation gives the AI context about its surroundings, which is essential for safe, real-time reactions.
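As a very rough sketch of how boxes turn into movement information, the snippet below estimates an object's image-space velocity from the centers of its boxes in two consecutive frames. The function names and the pixels-per-second unit are assumptions for illustration; production systems typically rely on tracking filters and additional sensors rather than raw two-frame differences.

```python
def center(box):
    """Center point of an (x_min, y_min, x_max, y_max) box."""
    return ((box[0] + box[2]) / 2, (box[1] + box[3]) / 2)


def pixel_velocity(prev_box, curr_box, dt):
    """Image-space velocity in pixels per second between two detections.

    dt is the time between the two frames in seconds.
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    (px, py), (cx, cy) = center(prev_box), center(curr_box)
    return ((cx - px) / dt, (cy - py) / dt)


prev_box = (100, 50, 140, 170)  # pedestrian in the earlier frame
curr_box = (106, 50, 146, 170)  # same pedestrian, half a second later
vx, vy = pixel_velocity(prev_box, curr_box, dt=0.5)
print(vx, vy)
```

A steady horizontal velocity toward the vehicle's lane is the kind of cue a downstream planner could use when deciding to slow down.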

The Role of Human Annotators in Continuous Learning

Self-driving models constantly evolve. As they’re deployed in new environments (new cities, weather, road conditions), they need updated data to stay accurate.

That means new bounding box annotations must be created regularly to:

· Add new object types or edge cases

· Improve model accuracy over time

· Adapt to changing regulations or infrastructure

A dedicated team of annotators ensures that this process remains consistent, high-quality, and scalable even as data volumes grow.

Final Thoughts

Bounding box annotation is not just a technical step in training self-driving cars; it’s the cornerstone of visual perception for autonomous systems.

Without accurate bounding boxes, a self-driving car would be unable to safely navigate the world, recognize obstacles, or make smart decisions. That’s why bounding box annotation must be done with precision, consistency, and domain knowledge.

As the self-driving industry continues to grow, so does the demand for high-quality annotated data. If your AI model depends on seeing the world clearly, bounding box annotation is where that clarity begins.
