Data centers and enterprise environments frequently encounter I/O bottlenecks when data is distributed unevenly across physical drives. When certain drives process a disproportionate volume of read and write requests, the resulting performance imbalance causes elevated latency and accelerated hardware wear. Network-attached storage (NAS) systems use file placement algorithms to address this imbalance.

By analyzing data access patterns and systematically routing files to the optimal physical locations, a modern NAS infrastructure ensures uniform resource utilization. Storage administrators rely on these mechanisms to maintain high throughput rates and prevent localized disk degradation. Understanding the underlying mechanics of file placement reveals exactly how these storage architectures sustain enterprise-grade performance.

The Mechanics of Disk Performance Imbalance

Uneven data distribution in NAS storage primarily occurs when hot data (files that users and applications access frequently) accumulates on a single drive or a small group of drives within an array. Meanwhile, cold data sits on other disks, consuming capacity while generating little or no I/O.

This asymmetry forces specific spindles or flash modules to operate at maximum capacity while neighboring drives sit idle. The overloaded disks create a queuing delay for read and write requests. The entire network application stack suffers from latency spikes because the storage backend cannot execute commands concurrently across the available hardware pool.
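The queuing effect described above can be made concrete with a rough model. The sketch below assumes each disk behaves like an M/M/1 queue (random arrivals, a single server, a fixed average service rate), which is a simplification rather than a description of any particular NAS. The function names and the 200 IOPS service rate are illustrative assumptions.

```python
def mm1_response_time(arrival_iops: float, service_iops: float) -> float:
    """Mean time a request spends at one disk (seconds), assuming an M/M/1 queue."""
    if arrival_iops >= service_iops:
        return float("inf")  # saturated disk: the queue grows without bound
    return 1.0 / (service_iops - arrival_iops)


def mean_latency(per_disk_iops: list[float], service_iops: float) -> float:
    """Request-weighted mean latency (seconds) across all disks in the pool."""
    total = sum(per_disk_iops)
    if total == 0:
        return 0.0
    weighted = sum(
        load * mm1_response_time(load, service_iops) for load in per_disk_iops
    )
    return weighted / total


# Same total load (400 IOPS) on four disks, each assumed to sustain 200 IOPS.
balanced = mean_latency([100, 100, 100, 100], service_iops=200)
skewed = mean_latency([190, 70, 70, 70], service_iops=200)
print(f"balanced: {balanced * 1000:.1f} ms, skewed: {skewed * 1000:.1f} ms")
```

Under these assumptions the total request rate is identical in both cases, yet the skewed layout yields a much higher average latency because nearly half of all requests wait behind the one disk running close to saturation.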

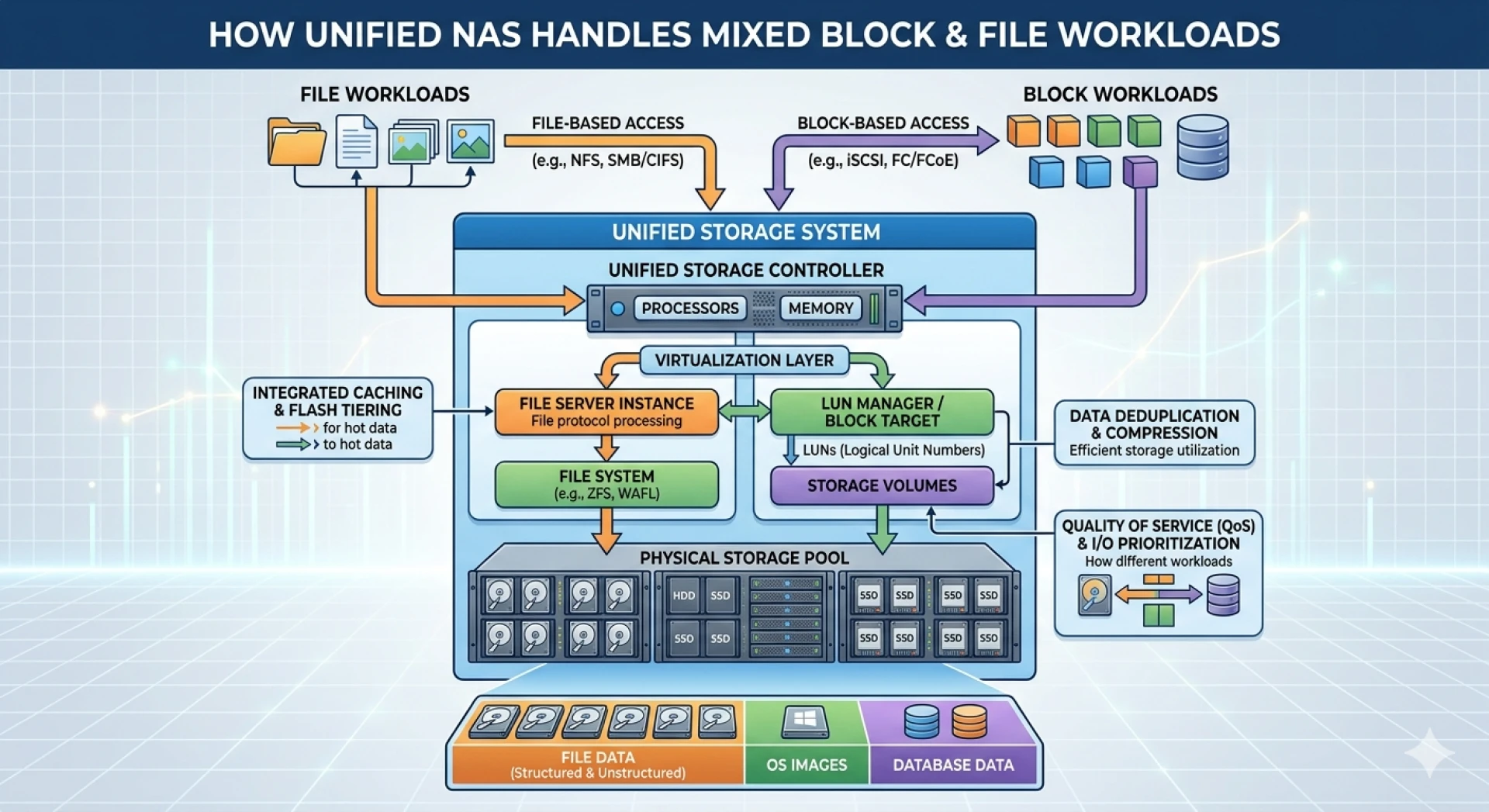

How a NAS Appliance Manages Data Distribution

A dedicated NAS appliance actively prevents these chokepoints by controlling exactly where individual blocks and files reside at the hardware level. Instead of writing files sequentially to the first available drive, the system applies logical distribution frameworks.

Algorithmic Striping and Parity

RAID configurations and advanced file systems within a NAS appliance distribute data chunks systematically. Striping divides large files into smaller segments and writes them across multiple drives in parallel. As a result, a single large read request pulls data from several disks at once, which spreads the load. In rotating-parity layouts such as RAID 5 and RAID 6, parity blocks are also distributed across the array, so the extra writes required for redundancy do not fall disproportionately on a single drive.
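To illustrate how striping with rotating parity spreads work across drives, here is a minimal sketch that maps a logical chunk number to a disk. It assumes a single-parity array with a left-symmetric style rotation; real controllers and file systems may use different layouts, and the function name `locate_chunk` is hypothetical.

```python
from collections import Counter


def locate_chunk(chunk_index: int, num_disks: int) -> tuple[int, int, int]:
    """Return (stripe, data_disk, parity_disk) for a logical data chunk.

    Assumes one parity chunk per stripe, rotated one disk to the left on
    each successive stripe (an illustrative layout, not a vendor's).
    """
    if num_disks < 3:
        raise ValueError("single-parity striping needs at least 3 disks")
    data_per_stripe = num_disks - 1
    stripe = chunk_index // data_per_stripe
    offset = chunk_index % data_per_stripe
    # Parity starts on the last disk and moves left by one each stripe.
    parity_disk = (num_disks - 1) - (stripe % num_disks)
    # Data chunks fill the remaining disks, starting just after the parity disk.
    data_disk = (parity_disk + 1 + offset) % num_disks
    return stripe, data_disk, parity_disk


# Count how many data and parity chunks each of 4 disks holds over 4 stripes.
data_counts: Counter[int] = Counter()
parity_counts: Counter[int] = Counter()
for chunk in range(12):  # 4 stripes x 3 data chunks per stripe
    stripe, data_disk, parity_disk = locate_chunk(chunk, num_disks=4)
    data_counts[data_disk] += 1
    if chunk % 3 == 0:  # record each stripe's parity chunk once
        parity_counts[parity_disk] += 1
print(dict(data_counts), dict(parity_counts))
```

Over one full rotation every disk ends up with the same number of data chunks and the same number of parity chunks, which is the property that keeps any one drive from becoming a dedicated parity bottleneck.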

Automated Load Balancing

Modern NAS operating systems continuously monitor drive utilization metrics. If the controller detects an I/O imbalance, the system initiates background migration processes. It moves highly active files away from saturated drives to disks with lower current utilization rates. This dynamic reallocation happens transparently, preserving application uptime while restoring hardware equilibrium.
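The background migration described above can be sketched as a simple greedy loop. This is an illustrative heuristic under stated assumptions, not the algorithm of any specific NAS operating system: per-file IOPS figures are assumed to be known, and the `rebalance` function, its `threshold` parameter, and the disk and file names are all hypothetical.

```python
def rebalance(
    placement: dict[str, dict[str, float]],
    threshold: float = 0.10,
    max_moves: int = 100,
) -> list[tuple[str, str, str]]:
    """Move files from the busiest disk to the idlest one until loads are close.

    placement maps disk name -> {file name -> observed IOPS} and is modified
    in place. Returns the list of (file, source_disk, target_disk) moves.
    """
    moves: list[tuple[str, str, str]] = []
    for _ in range(max_moves):
        loads = {disk: sum(files.values()) for disk, files in placement.items()}
        total = sum(loads.values())
        hot = max(loads, key=loads.get)
        cold = min(loads, key=loads.get)
        gap = loads[hot] - loads[cold]
        # Stop once the spread is within `threshold` of the mean per-disk load.
        if total == 0 or gap <= threshold * total / len(loads):
            break
        # Only files with 0 < IOPS < gap narrow the gap when moved.
        candidates = [
            (name, iops) for name, iops in placement[hot].items() if 0 < iops < gap
        ]
        if not candidates:
            break
        # Prefer the file whose load is closest to half the gap.
        name, _ = min(candidates, key=lambda item: abs(item[1] - gap / 2))
        placement[cold][name] = placement[hot].pop(name)
        moves.append((name, hot, cold))
    return moves


layout = {
    "disk0": {"db.log": 300.0, "vm.img": 200.0, "mail.idx": 100.0},
    "disk1": {"archive.tar": 50.0},
    "disk2": {"backup.bin": 50.0},
}
for move in rebalance(layout):
    print(move)
```

A production system would also weigh the cost of each migration (the copy itself generates I/O) and would typically throttle moves during peak hours; the sketch ignores both to keep the balancing logic visible.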

Integrating Hybrid Cloud Storage

On-premises hardware limits the total available spindle count for load distribution. Organizations frequently extend their local infrastructure by integrating cloud environments, utilizing resources like Azure disk storage to absorb heavy workloads.

By configuring a hybrid storage architecture, administrators can automate the migration of cold or archive data to cloud repositories. This frees capacity on the local NAS appliance, giving the local controller more room to place and relocate data when it rebalances load. Furthermore, shifting specific high-demand workloads entirely to Azure disk storage allows the local NAS to focus its processing power on latency-sensitive internal applications, reducing the risk of physical disk saturation on-site.
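A tiering policy of this kind usually starts by deciding which files count as cold. The sketch below shows one simple rule, selecting files by time since last access; the `FileRecord` type, the `select_cold_files` function, and the 90-day default are assumptions made for illustration. The actual upload to a cloud repository is deliberately left out, since it depends on the provider and tooling in use.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class FileRecord:
    path: str
    size_bytes: int
    last_access: datetime


def select_cold_files(
    files: list[FileRecord],
    now: datetime,
    max_idle: timedelta = timedelta(days=90),
) -> list[FileRecord]:
    """Return files not accessed within `max_idle`, oldest access first."""
    cutoff = now - max_idle
    cold = [f for f in files if f.last_access < cutoff]
    return sorted(cold, key=lambda f: f.last_access)


now = datetime(2024, 6, 1)
inventory = [
    FileRecord("/share/reports/q1.pdf", 4_000_000, datetime(2024, 5, 20)),
    FileRecord("/share/archive/2019.tar", 9_000_000_000, datetime(2023, 1, 15)),
    FileRecord("/share/media/raw.mov", 2_000_000_000, datetime(2024, 1, 10)),
]
candidates = select_cold_files(inventory, now)
freed = sum(f.size_bytes for f in candidates)
print([f.path for f in candidates], f"{freed / 1e9:.1f} GB reclaimable")
```

Passing `now` explicitly rather than reading the clock inside the function keeps the selection deterministic and easy to test against a fixed inventory.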

Strategic Advantages of Optimized File Placement

Systematic file distribution yields direct operational benefits for enterprise IT environments. Uniform disk utilization spreads wear evenly across mechanical hard drives and solid-state drives, which helps extend the lifespan of the array as a whole. Hardware replacement cycles become more predictable, reducing unexpected capital expenditures.

Additionally, balanced I/O operations support consistent application performance. Database queries execute with more predictable latency, and large-scale file transfers are less likely to stall. The storage infrastructure becomes a reliable foundation rather than a variable bottleneck.

Sustaining Enterprise Storage Performance

No single disk can service an unlimited number of requests at once. Systematically managing data placement is therefore one of the most effective ways to extract maximum performance from a storage array. By combining striping, automated load balancing, and cloud integration, organizations can keep utilization close to even across their storage infrastructure, supporting long-term reliability and sustained throughput.
